Following on from the previous experiment on the Cartpole environment, Coach comes with a handy collection of presets for more recent algorithms, notably Rainbow, which is a smorgasbord of improvements to DQN. These presets use the various Atari environments, which are the de facto performance benchmark for value-based methods. So much so, in fact, that I worry that algorithms are beginning to overfit these environments.

This small tutorial shows you how to run these presets and generate the results.

Setting Up Coach

You can follow the same instructions as in the previous DQN example to set up an environment that works with the Coach presets.

Training the Agents

Training the agents is as simple as calling the predefined Coach presets. For example:

export PYTHONUNBUFFERED=1 ; nohup coach -p Atari_DQN -lvl pong -e "pong_dqn" -ep "local/experiments/" --seed 42 --no_summary -s 300 &> coach.out &

The Rainbow version looks very similar:

export PYTHONUNBUFFERED=1 ; nohup coach -p Atari_Rainbow -lvl pong -e "pong_rainbow" -ep "local/experiments/" --seed 42 --no_summary -s 300 &> coach.out &

You can change the game used to train the agent with the -lvl parameter:

export PYTHONUNBUFFERED=1 ; nohup coach -p Atari_Rainbow -lvl ms_pacman -e "ms_pacman_rainbow" -ep "local/experiments/" --seed 42 --no_summary -s 300 &> coach.out &
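Since the commands above differ only in the preset and the level, you could generate them with a small helper. The following is a minimal sketch: `coach_cmd` is a hypothetical function name, it only uses the flags that already appear in the commands above, and it assumes you want the same `<level>_<algorithm>` experiment naming. It prints the commands rather than running them.

```shell
# coach_cmd PRESET LEVEL
# Print the coach command line for one preset/level pair.
# Hypothetical helper: it only reuses flags from the commands shown above.
coach_cmd() {
  local preset="$1" level="$2" algo
  # Derive the experiment suffix from the preset, e.g. Atari_Rainbow -> rainbow
  algo="$(printf '%s' "${preset#Atari_}" | tr '[:upper:]' '[:lower:]')"
  printf 'coach -p %s -lvl %s -e "%s_%s" -ep "local/experiments/" --seed 42 --no_summary -s 300\n' \
    "$preset" "$level" "$level" "$algo"
}

# Dry run: print one command for every preset/level combination.
for preset in Atari_DQN Atari_Rainbow; do
  for level in pong ms_pacman; do
    coach_cmd "$preset" "$level"
  done
done
```

If you launch several of the printed commands on the same machine, consider redirecting each one to its own log file instead of a shared coach.out, so that the runs do not overwrite each other's output.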

Copying the Data Off the VMs

Note that all of these commands put the process into the background. This is because I typically ran a second process to copy the results off the VM into another location, using the rsync command:

nohup watch -n 60 rsync -avzh local/experiments/pong_rainbow/ drive/experiments/pong_rainbow &> rsync.out &
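The watch utility is intended for interactive terminals, so when its output is redirected to a file the log may fill up with screen-control characters. A plain loop is one alternative. This is only a sketch: `sync_loop` is a hypothetical function name, and the interval and run-count arguments are my own additions for illustration.

```shell
# sync_loop SRC DEST [INTERVAL_SECONDS] [MAX_RUNS]
# Repeatedly mirror SRC into DEST with rsync.
# MAX_RUNS=0 (the default) means loop until the process is killed.
sync_loop() {
  local src="$1" dest="$2" interval="${3:-60}" max_runs="${4:-0}"
  local runs=0
  while true; do
    # Keep going after a failed copy; the next iteration retries.
    rsync -avzh "$src" "$dest" || echo "rsync failed, will retry" >&2
    runs=$((runs + 1))
    if [ "$max_runs" -gt 0 ] && [ "$runs" -ge "$max_runs" ]; then
      break
    fi
    sleep "$interval"
  done
}
```

To mirror the command above, you could save the function in a script that ends with `sync_loop local/experiments/pong_rainbow/ drive/experiments/pong_rainbow 60` and start that script with nohup in the background.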

I saved the results to a Google Cloud Storage bucket, mounted as a local directory with gcsfuse, using the following commands:

export VOLUME_NAME=your-volume-name

echo "deb http://packages.cloud.google.com/apt gcsfuse-bionic main" | sudo tee -a /etc/apt/sources.list.d/gcsfuse.list

curl https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -

sudo apt -qq update

sudo apt -qq install -y gcsfuse

mkdir drive

/usr/bin/gcsfuse --limit-ops-per-sec=100 $VOLUME_NAME drive

ls drive

Results

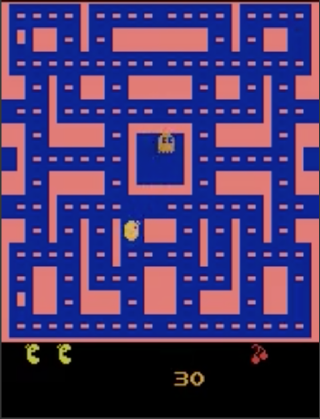

Once you have the data, you can plot the results from the CSV files using your favourite plotting tools. Below are two plots showing the results from training DQN and Rainbow agents on the Pong and Ms PacMan Atari environments.
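Before plotting, it helps to see which CSV files an experiment produced and which columns they contain. The sketch below is an assumption-laden helper, not part of Coach: `list_csv_columns` is a hypothetical name, and it assumes the first line of each file is a header row without quoted commas.

```shell
# list_csv_columns DIR
# Print every CSV file under DIR, followed by its column names (indented).
list_csv_columns() {
  local dir="$1" file
  find "$dir" -type f -name '*.csv' | sort | while IFS= read -r file; do
    echo "$file"
    # Assumes the first line is the header and no column name contains a comma.
    head -n 1 "$file" | tr ',' '\n' | sed 's/^/  /'
  done
}
```

For example, `list_csv_columns local/experiments/pong_rainbow` would list the files for the Rainbow Pong run, and you can then pick the columns you want to plot.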

Below are GIFs demonstrating the policy of the agents at various time steps. I present them in a table to help organize the two dimensions, algorithm and amount of training, but you might need to view these on a PC to see them in a high enough resolution.

Pong Policies

| Amount of Training (environment steps) | DQN | Rainbow |
|---|---|---|
| 0 | | |
| 1 million | | |
| 2 million | | |

Ms PacMan Policies

| Amount of Training (environment steps) | DQN | Rainbow |
|---|---|---|
| 0 | | |
| 1.5 million | | |
| 3 million | | |