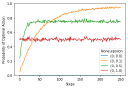

Comparing Simple Exploration Techniques: ε-Greedy, Annealing, and UCB

Phil Winder, Sep 2020

A quick workshop comparing different exploration techniques.

Bandit algorithms are related to reinforcement learning (RL) in the sense that they also attempt to optimize a decision based upon a reward. RL generalizes this to sequential decision making, but many of the ideas are the same. This makes bandits a good place to start when you are beginning to learn how to generate optimal strategies with reinforcement learning.
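
To make the comparison in the title concrete, here is a minimal sketch of a multi-armed bandit with three action-selection rules: fixed ε-greedy, annealed ε-greedy and UCB1. It assumes Bernoulli arms with made-up payout probabilities and an annealing schedule of ε = ε₀ / (1 + decay · t). Both are illustrative choices, not necessarily the ones used in the workshop, and the function names (`pull`, `run` and so on) are hypothetical.

```python
import math
import random


def pull(probs, arm, rng):
    """Bernoulli reward: 1 with probability probs[arm], else 0."""
    return 1.0 if rng.random() < probs[arm] else 0.0


def epsilon_greedy(values, counts, t, rng, epsilon=0.1):
    # With probability epsilon explore a random arm, otherwise exploit the best estimate.
    if rng.random() < epsilon:
        return rng.randrange(len(values))
    return max(range(len(values)), key=lambda a: values[a])


def annealed_epsilon_greedy(values, counts, t, rng, epsilon0=1.0, decay=0.01):
    # Assumed schedule: epsilon shrinks as epsilon0 / (1 + decay * t).
    epsilon = epsilon0 / (1.0 + decay * t)
    return epsilon_greedy(values, counts, t, rng, epsilon)


def ucb1(values, counts, t, rng, c=2.0):
    # Play every arm once, then pick the arm with the highest upper confidence bound:
    # mean + sqrt(c * ln(t) / n_a). With c = 2 this is the classic UCB1 rule.
    for a, n in enumerate(counts):
        if n == 0:
            return a
    return max(
        range(len(values)),
        key=lambda a: values[a] + math.sqrt(c * math.log(t + 1) / counts[a]),
    )


def run(policy, probs, steps=5000, seed=0):
    """Run one policy on the bandit; return (average reward, pulls per arm)."""
    rng = random.Random(seed)
    k = len(probs)
    values = [0.0] * k  # running mean reward per arm
    counts = [0] * k    # number of pulls per arm
    total = 0.0
    for t in range(steps):
        arm = policy(values, counts, t, rng)
        reward = pull(probs, arm, rng)
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]  # incremental mean
        total += reward
    return total / steps, counts


if __name__ == "__main__":
    probs = [0.2, 0.5, 0.75]  # hypothetical payout probabilities, for illustration only
    for name, policy in [
        ("epsilon-greedy", epsilon_greedy),
        ("annealed", annealed_epsilon_greedy),
        ("UCB1", ucb1),
    ]:
        avg, counts = run(policy, probs)
        print(f"{name:15s} avg reward={avg:.3f} pulls={counts}")
```

Each policy sees the same interface (current estimates, pull counts, time step, random generator), so swapping exploration strategies is a one-line change. With arms this well separated, UCB1 should concentrate most of its pulls on the best arm; how the ε-greedy variants fare depends on ε and the decay rate.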

Investigate how altering ε affects exploration, and take a quick look at bandit algorithms.
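
One way to investigate the effect of ε is to sweep it and measure how often the agent picks the best arm. The sketch below assumes a Bernoulli bandit with hypothetical payout probabilities and averages over several random seeds. ε = 0 is pure exploitation (the agent can lock onto a poor arm), while ε = 1 is pure random exploration (each arm is chosen about 1/k of the time).

```python
import random


def epsilon_greedy_run(probs, epsilon, steps=2000, seed=0):
    """Run a fixed-epsilon greedy agent on a Bernoulli bandit.

    Returns (average reward, fraction of steps the best arm was chosen).
    """
    rng = random.Random(seed)
    k = len(probs)
    best = max(range(k), key=lambda a: probs[a])
    values = [0.0] * k  # running mean reward per arm
    counts = [0] * k
    total, best_pulls = 0.0, 0
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(k)  # explore
        else:
            arm = max(range(k), key=lambda a: values[a])  # exploit
        reward = 1.0 if rng.random() < probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]
        total += reward
        if arm == best:
            best_pulls += 1
    return total / steps, best_pulls / steps


def sweep(probs, epsilons, runs=20, steps=2000):
    """Average reward and best-arm fraction for each epsilon over several seeds."""
    results = {}
    for eps in epsilons:
        rewards, fracs = zip(
            *(epsilon_greedy_run(probs, eps, steps, seed) for seed in range(runs))
        )
        results[eps] = (sum(rewards) / runs, sum(fracs) / runs)
    return results


if __name__ == "__main__":
    probs = [0.2, 0.5, 0.75]  # hypothetical payout probabilities
    for eps, (avg, frac_best) in sweep(probs, (0.0, 0.01, 0.1, 0.3, 1.0)).items():
        print(f"epsilon={eps:<5} avg reward={avg:.3f} best arm chosen {frac_best:.1%}")
```

Averaging over seeds matters here: a single run with a small ε can look very good or very bad depending on which arm happens to pay out first.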